Naive Bayes Exercise-程序员宅基地

技术标签: Naive Bayes Machine Learning

本文将通过朴素贝叶斯解决邮件的分类问题。理论文献参考:http://openclassroom.stanford.edu/MainFolder/DocumentPage.php?course=MachineLearning&doc=exercises/ex6/ex6.html。文章将分为三个部分,首先介绍一下基本的概率概念,然后对给出了特征的邮件进行分类,最后给出邮件特征的提取代码。

概率知识

表示在一系列事件(数据)中发生y 的概率。

表示在一系列事件(数据)中发生y 的概率。

表示给定x 后,发生y 的概率。

表示给定x 后,发生y 的概率。

称之为先验概率,即不需要考虑x 的影响;

称之为先验概率,即不需要考虑x 的影响;

表示给定x 后,发生y 的概率,故称之为y 的后验概率。

表示给定x 后,发生y 的概率,故称之为y 的后验概率。

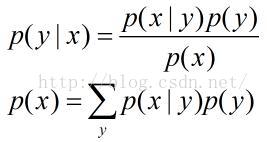

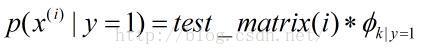

表示在一封垃圾邮件中给定单词是字典中第k个单词的概率;

表示在一封垃圾邮件中给定单词是字典中第k个单词的概率;

表示在一封非垃圾邮件中给定单词是字典中第k个单词的概率;

表示在一封非垃圾邮件中给定单词是字典中第k个单词的概率;

表示训练邮件中垃圾邮件所占概率;

表示训练邮件中垃圾邮件所占概率;

个单词,字典中包含

个单词,字典中包含

个单词。

个单词。

邮件分类

clc, clear;

% Load the features

numTrainDocs = 700;

numTokens = 2500;

M = dlmread('train-features.txt', ' ');

spmatrix = sparse(M(:,1), M(:,2), M(:,3), numTrainDocs, numTokens); % size: numTrainDocs * numTokens

% row: document numbers

% col: words in dirctionary

% element: occurrences

train_matrix = full(spmatrix);

% Load the labels for training set

train_labels = dlmread('train-labels.txt'); % i-th label corresponds to the i-th row in train_matrix

% Train

% 1. Calculate \phi_y

phi_y = sum(train_labels) ./ length(train_labels);

% 2. Calculate each \phi_k|y=1 for each dictionary word and store the all

% result in a vector

spam_index = find(1 == train_labels);

nonspam_index = find(0 == train_labels);

spam_sum = sum(train_matrix(spam_index, :));

nonspam_sum = sum(train_matrix(nonspam_index, :));

phi_k_y1 = (spam_sum + 1) ./ (sum(spam_sum) + numTokens);

phi_k_y0 = (nonspam_sum + 1) ./ (sum(nonspam_sum) + numTokens);

% Test set

test_features = dlmread('test-features.txt');

spmatrix = sparse(test_features(:,1), test_features(:,2),test_features(:,3));

test_matrix = full(spmatrix);

numTestDocs = size(test_matrix, 1);

% Calculate probability

prob_spam = log(test_matrix * phi_k_y1') + log(phi_y);

prob_nonspam = log(test_matrix * phi_k_y0') + log(1 - phi_y);

output = prob_spam > prob_nonspam;

% Read the correct labels of the test set

test_labels = dlmread('test-labels.txt');

% Compute the error on the test set

% A document is misclassified if it's predicted label is different from

% the actual label, so count the number of 1's from an exclusive "or"

numdocs_wrong = sum(xor(output, test_labels))

%Print out error statistics on the test set

error = numdocs_wrong/numTestDocs<pre name="code" class="plain">log_a = test_matrix*(log(prob_tokens_spam))' + log(prob_spam);

log_b = test_matrix*(log(prob_tokens_nonspam))'+ log(1 - prob_spam);  ,即对于每一个特征单词求总的概率

,即对于每一个特征单词求总的概率

log_a = log(test_matrix * prob_tokens_spam') + log(prob_spam);

log_b = log(test_matrix * prob_tokens_nonspam')+ log(1 - prob_spam); 特征单词提取

clc;clear

% This file extracts features of emails for judging whether an email is a

% spam or not.

%% Read all text files in cell variable 'data'

data = cell(0);

directory = dir('.');

numberDirect = length(directory);

for n = 3 : numberDirect

files = dir(directory(n).name);

numberFiles = length(files);

for i = 3 : numberFiles

% Be careful the path

fid = fopen(['.\', directory(n).name, '\', files(i).name]);

if (-1 == fid)

fclose(fid);

continue;

end

dataTemp = textscan(fid, '%s', '\n');

fclose(fid);

data = [data; dataTemp{1, 1}];

end

end

%% Sort the data by alphabet.

data = sort(data);

% Count occurrences and delete duplicate words and store in a struct variable.

numberStrings = length(data);

words = struct('strings', {}, 'occurrences', 0);

numberFeature = 1;

occurrences = 1;

for i = 1 : numberStrings - 1

if (strcmp(char(data(i)), char(data(i + 1))))

occurrences = occurrences + 1;

else

words(numberFeature).strings = char(data(i));

words(numberFeature).occurrences = occurrences;

numberFeature = numberFeature + 1;

occurrences = 1;

end

end

words = struct2cell(words);

%% This is only for testing, or you can use

% 'sortrows(cell2mat(words(2, 1, :)))' for getting the 2500 most words.

orders = ones(numberFeature - 1, 1);

for i = 2 : numberFeature - 1

orders(i) = orders(i) + orders(i - 1);

end

features_number = cell2mat(words(2, 1, :));

features_numbers = [features_number(:), orders];

%% Get the 2500 most words to generate dictionary

features_numbers = sortrows(features_numbers);

directionary = words(:, :, features_numbers(:, 2));

directionary = directionary(1, 1, end - 2500 : end - 1);

directionary = sort(directionary(:));

%% calculate features in all folders for trainset and testset

for n = 3 : numberDirect

files = dir(directory(n).name);

numberFiles = length(files);

for i = 3 : numberFiles

fid = fopen(['.\', directory(n).name, '\', files(i).name]);

if (-1 == fid)

fclose(fid);

continue;

end

dataTemp = textscan(fid, '%s', '\n');

fclose(fid);

data = dataTemp{1,1};

feature = find_indexandcount(data, directionary);

docnumber = (i - 2) * ones(size(feature, 1), 1);

featrues = [docnumber, feature];

if (3 == i)

dlmwrite(['.\', directory(n).name, '\', 'features.txt'], featrues, 'delimiter', ' ');

else

dlmwrite(['.\', directory(n).name, '\', 'features.txt'], featrues, '-append', 'delimiter', ' ');

end

end

endfunction result = find_indexandcount(email, dictionary)

% This function computes words' index and count in an email by a dictionary.

numberStrings = length(email);

numberWords = length(dictionary);

count = zeros(length(dictionary), 1);

for i = 1 : numberStrings

count = count + strcmp(email(i), dictionary);

end

% Record order sequence

orders = ones(numberWords, 1);

for i = 2 : length(dictionary) - 1

orders(i) = orders(i) + orders(i - 1);

end

result = sortrows([orders, count], 2);

% Find words which appear more than 1 times

reserve = find(result(:, 2));

result = result(reserve, :);

end% train.m

% Exercise 6: Naive Bayes text classifier

clear all; close all; clc

% store the number of training examples

numTrainDocs = 350;

% store the dictionary size

numTokens = 2500;

% read the features matrix

M0 = dlmread('nonspam_features_train.txt', ' ');

M1 = dlmread('spam_features_train.txt', ' ');

nonspmatrix = sparse(M0(:,1), M0(:,2), M0(:,3), numTrainDocs, numTokens);

nonspamtrain_matrix = full(nonspmatrix);

spmatrix = sparse(M1(:,1), M1(:,2), M1(:,3), numTrainDocs, numTokens);

spamtrain_matrix = full(spmatrix);

% Calculate probability of spam

phi_y = size(spamtrain_matrix, 1) / (size(spamtrain_matrix, 1) + size(nonspamtrain_matrix, 1));

spam_sum = sum(spamtrain_matrix);

nonspam_sum = sum(nonspamtrain_matrix);

% the k-th entry of prob_tokens_spam represents phi_(k|y=1)

phi_k_y1 = (spam_sum + 1) ./ (sum(spam_sum) + numTokens);

% the k-th entry of prob_tokens_nonspam represents phi_(k|y=0)

phi_k_y0 = (nonspam_sum + 1) ./ (sum(nonspam_sum) + numTokens);

% Test set

test_features_spam = dlmread('spam_features_test.txt',' ');

test_features_nonspam = dlmread('nonspam_features_test.txt',' ');

numTestDocs = max(test_features_spam(:,1));

spmatrix = sparse(test_features_spam(:,1), test_features_spam(:,2),test_features_spam(:,3),numTestDocs, numTokens);

nonspmatrix = sparse(test_features_nonspam(:,1), test_features_nonspam(:,2),test_features_nonspam(:,3),numTestDocs,numTokens);

test_matrix = [full(spmatrix); full(nonspmatrix)];

% Calculate probability

prob_spam = log(test_matrix * phi_k_y1') + log(phi_y);

prob_nonspam = log(test_matrix * phi_k_y0') + log(1 - phi_y);

output = prob_spam > prob_nonspam;

% Compute the error on the test set

test_labels = [ones(numTestDocs, 1); zeros(numTestDocs, 1)];

wrong_numdocs = sum(xor(output, test_labels))

%Print out error statistics on the test set

error_prob = wrong_numdocs/numTestDocs

其结果如下:(分类错误的个数及概率)

智能推荐

class和struct的区别-程序员宅基地

文章浏览阅读101次。4.class可以有⽆参的构造函数,struct不可以,必须是有参的构造函数,⽽且在有参的构造函数必须初始。2.Struct适⽤于作为经常使⽤的⼀些数据组合成的新类型,表示诸如点、矩形等主要⽤来存储数据的轻量。1.Class⽐较适合⼤的和复杂的数据,表现抽象和多级别的对象层次时。2.class允许继承、被继承,struct不允许,只能继承接⼝。3.Struct有性能优势,Class有⾯向对象的扩展优势。3.class可以初始化变量,struct不可以。1.class是引⽤类型,struct是值类型。

android使用json后闪退,应用闪退问题:从json信息的解析开始就会闪退-程序员宅基地

文章浏览阅读586次。想实现的功能是点击顶部按钮之后按关键字进行搜索,已经可以从服务器收到反馈的json信息,但从json信息的解析开始就会闪退,加载listview也不知道行不行public abstract class loadlistview{public ListView plv;public String js;public int listlength;public int listvisit;public..._rton转json为什么会闪退

如何使用wordnet词典,得到英文句子的同义句_get_synonyms wordnet-程序员宅基地

文章浏览阅读219次。如何使用wordnet词典,得到英文句子的同义句_get_synonyms wordnet

系统项目报表导出功能开发_积木报表 多线程-程序员宅基地

文章浏览阅读521次。系统项目报表导出 导出任务队列表 + 定时扫描 + 多线程_积木报表 多线程

ajax 如何从服务器上获取数据?_ajax 获取http数据-程序员宅基地

文章浏览阅读1.1k次,点赞9次,收藏9次。使用AJAX技术的好处之一是它能够提供更好的用户体验,因为它允许在不重新加载整个页面的情况下更新网页的某一部分。另外,AJAX还使得开发人员能够创建更复杂、更动态的Web应用程序,因为它们可以在后台与服务器进行通信,而不需要打断用户的浏览体验。在Web开发中,AJAX(Asynchronous JavaScript and XML)是一种常用的技术,用于在不重新加载整个页面的情况下,从服务器获取数据并更新网页的某一部分。使用AJAX,你可以创建异步请求,从而提供更快的响应和更好的用户体验。_ajax 获取http数据

Linux图形终端与字符终端-程序员宅基地

文章浏览阅读2.8k次。登录退出、修改密码、关机重启_字符终端

随便推点

Python与Arduino绘制超声波雷达扫描_超声波扫描建模 python库-程序员宅基地

文章浏览阅读3.8k次,点赞3次,收藏51次。前段时间看到一位发烧友制作的超声波雷达扫描神器,用到了Arduino和Processing,可惜啊,我不会Processing更看不懂人家的程序,咋办呢?嘿嘿,所以我就换了个思路解决,因为我会一点Python啊,那就动手吧!在做这个案例之前先要搞明白一个问题:怎么将Arduino通过超声波检测到的距离反馈到Python端?这个嘛,我首先想到了串行通信接口。没错!就是串口。只要Arduino将数据发送给COM口,然后Python能从COM口读取到这个数据就可以啦!我先写了一个测试程序试了一下,OK!搞定_超声波扫描建模 python库

凯撒加密方法介绍及实例说明-程序员宅基地

文章浏览阅读4.2k次。端—端加密指信息由发送端自动加密,并且由TCP/IP进行数据包封装,然后作为不可阅读和不可识别的数据穿过互联网,当这些信息到达目的地,将被自动重组、解密,而成为可读的数据。不可逆加密算法的特征是加密过程中不需要使用密钥,输入明文后由系统直接经过加密算法处理成密文,这种加密后的数据是无法被解密的,只有重新输入明文,并再次经过同样不可逆的加密算法处理,得到相同的加密密文并被系统重新识别后,才能真正解密。2.使用时,加密者查找明文字母表中需要加密的消息中的每一个字母所在位置,并且写下密文字母表中对应的字母。_凯撒加密

工控协议--cip--协议解析基本记录_cip协议embedded_service_error-程序员宅基地

文章浏览阅读5.7k次。CIP报文解析常用到的几个字段:普通类型服务类型:[0x00], CIP对象:[0x02 Message Router], ioi segments:[XX]PCCC(带cmd和func)服务类型:[0x00], CIP对象:[0x02 Message Router], cmd:[0x101], fnc:[0x101]..._cip协议embedded_service_error

如何在vs2019及以后版本(如vs2022)上添加 添加ActiveX控件中的MFC类_vs添加mfc库-程序员宅基地

文章浏览阅读2.4k次,点赞9次,收藏13次。有时候我们在MFC项目开发过程中,需要用到一些微软已经提供的功能,如VC++使用EXCEL功能,这时候我们就能直接通过VS2019到如EXCEL.EXE方式,生成对应的OLE头文件,然后直接使用功能,那么,我们上篇文章中介绍了vs2017及以前的版本如何来添加。但由于微软某些方面考虑,这种方式已被放弃。从上图中可以看出,这一功能,在从vs2017版本15.9开始,后续版本已经删除了此功能。那么我们如果仍需要此功能,我们如何在新版本中添加呢。_vs添加mfc库

frame_size (1536) was not respected for a non-last frame_frame_size (1024) was not respected for a non-last-程序员宅基地

文章浏览阅读785次。用ac3编码,执行编码函数时报错入如下:[ac3 @ 0x7fed7800f200] frame_size (1536) was not respected for anon-last frame (avcodec_encode_audio2)用ac3编码时每次送入编码器的音频采样数应该是1536个采样,不然就会报上述错误。这个数字并非刻意固定,而是跟ac3内部的编码算法原理相关。全网找不到,国内音视频之路还有很长的路,音视频人一起加油吧~......_frame_size (1024) was not respected for a non-last frame

Android移动应用开发入门_在安卓移动应用开发中要在活动类文件中声迷你一个复选框变量-程序员宅基地

文章浏览阅读230次,点赞2次,收藏2次。创建Android应用程序一个项目里面可以有很多模块,而每一个模块就对应了一个应用程序。项目结构介绍_在安卓移动应用开发中要在活动类文件中声迷你一个复选框变量