NLP Practice Series: 1. Exploring NLP Datasets

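1. Dataset sources

- Chinese dataset: THUCNews

- THUCNews subset: https://pan.baidu.com/s/1hugrfRu (password: qfud)

- English dataset: IMDB dataset (Sentiment Analysis)

2. Exploring the IMDB data

# Check the dependencies and the TensorFlow version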

import tensorflow as tf

from tensorflow import keras

import numpy as np

print(tf.__version__)

1.12.0

# Download the dataset

imdb = keras.datasets.imdb

(train_data, train_labels), (test_data, test_labels) = imdb.load_data(num_words=10000)

Explore the training data

print("Training entries: {}, labels: {}".format(len(train_data), len(train_labels)))

Training entries: 25000, labels: 25000

print(train_data[0])

[1, 14, 22, 16, 43, 530, 973, 1622, 1385, 65, 458, 4468, 66, 3941, 4, 173, 36, 256, 5, 25, 100, 43, 838, 112, 50, 670, 2, 9, 35, 480, 284, 5, 150, 4, 172, 112, 167, 2, 336, 385, 39, 4, 172, 4536, 1111, 17, 546, 38, 13, 447, 4, 192, 50, 16, 6, 147, 2025, 19, 14, 22, 4, 1920, 4613, 469, 4, 22, 71, 87, 12, 16, 43, 530, 38, 76, 15, 13, 1247, 4, 22, 17, 515, 17, 12, 16, 626, 18, 2, 5, 62, 386, 12, 8, 316, 8, 106, 5, 4, 2223, 5244, 16, 480, 66, 3785, 33, 4, 130, 12, 16, 38, 619, 5, 25, 124, 51, 36, 135, 48, 25, 1415, 33, 6, 22, 12, 215, 28, 77, 52, 5, 14, 407, 16, 82, 2, 8, 4, 107, 117, 5952, 15, 256, 4, 2, 7, 3766, 5, 723, 36, 71, 43, 530, 476, 26, 400, 317, 46, 7, 4, 2, 1029, 13, 104, 88, 4, 381, 15, 297, 98, 32, 2071, 56, 26, 141, 6, 194, 7486, 18, 4, 226, 22, 21, 134, 476, 26, 480, 5, 144, 30, 5535, 18, 51, 36, 28, 224, 92, 25, 104, 4, 226, 65, 16, 38, 1334, 88, 12, 16, 283, 5, 16, 4472, 113, 103, 32, 15, 16, 5345, 19, 178, 32]

len(train_data[0]), len(train_data[1])

(218, 189)

# A dictionary mapping words to an integer index

word_index = imdb.get_word_index()

# The first indices are reserved

word_index = {k: (v + 3) for k, v in word_index.items()}

word_index["<PAD>"] = 0

word_index["<START>"] = 1

word_index["<UNK>"] = 2 # unknown

word_index["<UNUSED>"] = 3

reverse_word_index = dict([(value, key) for (key, value) in word_index.items()])

def decode_review(text):

    return ' '.join([reverse_word_index.get(i, '?') for i in text])

decode_review(train_data[0])

"<START> this film was just brilliant casting location scenery story direction everyone's really suited the part they played and you could just imagine being there robert <UNK> is an amazing actor and now the same being director <UNK> father came from the same scottish island as myself so i loved the fact there was a real connection with this film the witty remarks throughout the film were great it was just brilliant so much that i bought the film as soon as it was released for <UNK> and would recommend it to everyone to watch and the fly fishing was amazing really cried at the end it was so sad and you know what they say if you cry at a film it must have been good and this definitely was also <UNK> to the two little boy's that played the <UNK> of norman and paul they were just brilliant children are often left out of the <UNK> list i think because the stars that play them all grown up are such a big profile for the whole film but these children are amazing and should be praised for what they have done don't you think the whole story was so lovely because it was true and was someone's life after all that was shared with us all"

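The index shift used above can be checked on a toy vocabulary. The sketch below is only an illustration: `toy_index` is a made-up three-word mapping standing in for `imdb.get_word_index()`, and `encode_review` is a hypothetical helper (not part of Keras) that reverses `decode_review`.

```python
# Toy vocabulary standing in for imdb.get_word_index() (hypothetical values).
toy_index = {"the": 1, "film": 2, "great": 3}

# Shift every index by 3 so that 0-3 are free for the reserved tokens.
word_index = {k: (v + 3) for k, v in toy_index.items()}
word_index["<PAD>"] = 0
word_index["<START>"] = 1
word_index["<UNK>"] = 2  # unknown word
word_index["<UNUSED>"] = 3

reverse_word_index = {value: key for key, value in word_index.items()}


def encode_review(text):
    """Hypothetical inverse of decode_review: words -> integer ids."""
    ids = [word_index["<START>"]]
    for word in text.lower().split():
        # Words missing from the vocabulary map to <UNK>.
        ids.append(word_index.get(word, word_index["<UNK>"]))
    return ids


def decode_review(ids):
    """Integer ids -> words, with '?' for ids that have no entry."""
    return " ".join(reverse_word_index.get(i, "?") for i in ids)


encoded = encode_review("The film was great")
decoded = decode_review(encoded)
print(encoded)
print(decoded)
```

The word "was" is not in the toy vocabulary, so it round-trips as `<UNK>`, just like the out-of-vocabulary words in the decoded review above.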
Use the pad_sequences function to standardize the lengths:

train_data = keras.preprocessing.sequence.pad_sequences(train_data,

value=word_index["<PAD>"],

padding='post',

maxlen=256)

test_data = keras.preprocessing.sequence.pad_sequences(test_data,

value=word_index["<PAD>"],

padding='post',

maxlen=256)

len(train_data[0]), len(train_data[1])

(256, 256)

print(train_data[0])

[ 1 14 22 16 43 530 973 1622 1385 65 458 4468 66 3941 4

173 36 256 5 25 100 43 838 112 50 670 2 9 35 480

284 5 150 4 172 112 167 2 336 385 39 4 172 4536 1111

17 546 38 13 447 4 192 50 16 6 147 2025 19 14 22

4 1920 4613 469 4 22 71 87 12 16 43 530 38 76 15

13 1247 4 22 17 515 17 12 16 626 18 2 5 62 386

12 8 316 8 106 5 4 2223 5244 16 480 66 3785 33 4

130 12 16 38 619 5 25 124 51 36 135 48 25 1415 33

6 22 12 215 28 77 52 5 14 407 16 82 2 8 4

107 117 5952 15 256 4 2 7 3766 5 723 36 71 43 530

476 26 400 317 46 7 4 2 1029 13 104 88 4 381 15

297 98 32 2071 56 26 141 6 194 7486 18 4 226 22 21

134 476 26 480 5 144 30 5535 18 51 36 28 224 92 25

104 4 226 65 16 38 1334 88 12 16 283 5 16 4472 113

103 32 15 16 5345 19 178 32 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0]
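To make the padding step concrete, here is a minimal pure-Python sketch of what post-padding does. `pad_post` is a hypothetical helper written for illustration, not the Keras implementation; it assumes that sequences longer than `maxlen` lose items from the front, which is meant to mirror the default `truncating='pre'` of `pad_sequences`.

```python
def pad_post(sequences, maxlen, value=0):
    """Pad each sequence at the end with `value` until it has `maxlen` items.

    Sequences longer than `maxlen` keep only their last `maxlen` items.
    Assumes maxlen >= 1.
    """
    padded = []
    for seq in sequences:
        seq = list(seq)[-maxlen:]  # drop items from the front if too long
        padded.append(seq + [value] * (maxlen - len(seq)))
    return padded


reviews = [[1, 14, 22], [1, 5, 6, 7, 8, 9]]
result = pad_post(reviews, maxlen=4)
print(result)
```

The short review gains trailing zeros (the `<PAD>` index), and every row ends up with the same length, which is what lets the reviews be stacked into one integer array.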

Build the model

# input shape is the vocabulary count used for the movie reviews (10,000 words)

vocab_size = 10000

model = keras.Sequential()

model.add(keras.layers.Embedding(vocab_size, 16))

model.add(keras.layers.GlobalAveragePooling1D())

model.add(keras.layers.Dense(16, activation=tf.nn.relu))

model.add(keras.layers.Dense(1, activation=tf.nn.sigmoid))

model.summary()

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

embedding (Embedding) (None, None, 16) 160000

_________________________________________________________________

global_average_pooling1d (Gl (None, 16) 0

_________________________________________________________________

dense (Dense) (None, 16) 272

_________________________________________________________________

dense_1 (Dense) (None, 1) 17

=================================================================

Total params: 160,289

Trainable params: 160,289

Non-trainable params: 0

_________________________________________________________________

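The model uses the Keras Sequential API, which stacks network layers linearly. The model above is structured as follows:

1. The first layer is an Embedding layer. It takes the integer-encoded vocabulary and looks up the embedding vector for each word index. These vectors are learned as the model trains. They add a dimension to the output array, so the resulting dimensions are (batch, sequence, embedding).

2. Next, a GlobalAveragePooling1D layer returns a fixed-length output vector for each example by averaging over the sequence dimension. This lets the model handle inputs of varying length in the simplest way possible.

3. This fixed-length output vector is passed to a fully connected (Dense) layer with 16 hidden units.

4. The last layer is densely connected to a single output node. With the sigmoid activation function, the result is a float between 0 and 1 that represents a probability or confidence level.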
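The parameter counts printed by `model.summary()` can be reproduced by hand, which is a quick way to check that the layer description matches the model. The sketch below is plain Python with hypothetical helper names; it also shows the averaging that GlobalAveragePooling1D performs, on a tiny made-up sequence of two-dimensional vectors.

```python
def embedding_params(vocab_size, dim):
    """One vector of length `dim` per word index."""
    return vocab_size * dim


def dense_params(inputs, units):
    """A weight per (input, unit) pair plus one bias per unit."""
    return inputs * units + units


vocab_size, embed_dim = 10000, 16
counts = [
    embedding_params(vocab_size, embed_dim),  # Embedding
    0,                                        # GlobalAveragePooling1D has no weights
    dense_params(embed_dim, 16),              # Dense(16, relu)
    dense_params(16, 1),                      # Dense(1, sigmoid)
]
total = sum(counts)
print(counts, total)


def global_average_pool(sequence_vectors):
    """Average a list of equal-length vectors over the sequence axis."""
    steps = len(sequence_vectors)
    return [sum(column) / steps for column in zip(*sequence_vectors)]


# Three time steps, embedding size 2 (made-up numbers).
pooled = global_average_pool([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])
print(pooled)
```

The total agrees with the 160,289 trainable parameters in the summary, and the pooled vector has the embedding size regardless of how many time steps went in.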

model.compile(optimizer=tf.train.AdamOptimizer(),

loss='binary_crossentropy',

metrics=['accuracy'])

x_val = train_data[:10000]

partial_x_train = train_data[10000:]

y_val = train_labels[:10000]

partial_y_train = train_labels[10000:]

history = model.fit(partial_x_train,

partial_y_train,

epochs=40,

batch_size=512,

validation_data=(x_val, y_val),

verbose=1)

Train on 15000 samples, validate on 10000 samples

Epoch 1/40

15000/15000 [==============================] - 9s 622us/step - loss: 0.6918 - acc: 0.6397 - val_loss: 0.6898 - val_acc: 0.7141

Epoch 2/40

15000/15000 [==============================] - 2s 140us/step - loss: 0.6854 - acc: 0.6933 - val_loss: 0.6802 - val_acc: 0.7552

Epoch 3/40

15000/15000 [==============================] - 2s 152us/step - loss: 0.6703 - acc: 0.7735 - val_loss: 0.6611 - val_acc: 0.7557

Epoch 4/40

15000/15000 [==============================] - 2s 129us/step - loss: 0.6436 - acc: 0.7826 - val_loss: 0.6312 - val_acc: 0.7570

Epoch 5/40

15000/15000 [==============================] - 1s 94us/step - loss: 0.6048 - acc: 0.8029 - val_loss: 0.5909 - val_acc: 0.7942

Epoch 6/40

15000/15000 [==============================] - 1s 97us/step - loss: 0.5574 - acc: 0.8209 - val_loss: 0.5458 - val_acc: 0.8107

Epoch 7/40

15000/15000 [==============================] - 1s 92us/step - loss: 0.5065 - acc: 0.8401 - val_loss: 0.5007 - val_acc: 0.8279

Epoch 8/40

15000/15000 [==============================] - 2s 130us/step - loss: 0.4583 - acc: 0.8541 - val_loss: 0.4591 - val_acc: 0.8370

Epoch 9/40

15000/15000 [==============================] - 2s 114us/step - loss: 0.4141 - acc: 0.8675 - val_loss: 0.4234 - val_acc: 0.8490

Epoch 10/40

15000/15000 [==============================] - 2s 104us/step - loss: 0.3768 - acc: 0.8791 - val_loss: 0.3948 - val_acc: 0.8560

Epoch 11/40

15000/15000 [==============================] - 2s 100us/step - loss: 0.3454 - acc: 0.8875 - val_loss: 0.3730 - val_acc: 0.8608

Epoch 12/40

15000/15000 [==============================] - 1s 94us/step - loss: 0.3198 - acc: 0.8955 - val_loss: 0.3536 - val_acc: 0.8671

Epoch 13/40

15000/15000 [==============================] - 1s 89us/step - loss: 0.2979 - acc: 0.9001 - val_loss: 0.3394 - val_acc: 0.8709

Epoch 14/40

15000/15000 [==============================] - 2s 118us/step - loss: 0.2791 - acc: 0.9055 - val_loss: 0.3280 - val_acc: 0.8732

Epoch 15/40

15000/15000 [==============================] - 1s 95us/step - loss: 0.2625 - acc: 0.9105 - val_loss: 0.3181 - val_acc: 0.8780

Epoch 16/40

15000/15000 [==============================] - 1s 81us/step - loss: 0.2481 - acc: 0.9155 - val_loss: 0.3104 - val_acc: 0.8795

Epoch 17/40

15000/15000 [==============================] - 1s 88us/step - loss: 0.2354 - acc: 0.9192 - val_loss: 0.3059 - val_acc: 0.8775

Epoch 18/40

15000/15000 [==============================] - 1s 87us/step - loss: 0.2230 - acc: 0.9235 - val_loss: 0.2996 - val_acc: 0.8810

Epoch 19/40

15000/15000 [==============================] - 2s 120us/step - loss: 0.2126 - acc: 0.9272 - val_loss: 0.2955 - val_acc: 0.8823

Epoch 20/40

15000/15000 [==============================] - 1s 87us/step - loss: 0.2021 - acc: 0.9303 - val_loss: 0.2918 - val_acc: 0.8840

Epoch 21/40

15000/15000 [==============================] - 1s 89us/step - loss: 0.1927 - acc: 0.9350 - val_loss: 0.2891 - val_acc: 0.8852

Epoch 22/40

15000/15000 [==============================] - 1s 89us/step - loss: 0.1841 - acc: 0.9386 - val_loss: 0.2878 - val_acc: 0.8839

Epoch 23/40

15000/15000 [==============================] - 1s 87us/step - loss: 0.1761 - acc: 0.9420 - val_loss: 0.2867 - val_acc: 0.8857

Epoch 24/40

15000/15000 [==============================] - 1s 90us/step - loss: 0.1685 - acc: 0.9465 - val_loss: 0.2853 - val_acc: 0.8846

Epoch 25/40

15000/15000 [==============================] - 2s 115us/step - loss: 0.1613 - acc: 0.9487 - val_loss: 0.2846 - val_acc: 0.8855

Epoch 26/40

15000/15000 [==============================] - 2s 106us/step - loss: 0.1547 - acc: 0.9514 - val_loss: 0.2848 - val_acc: 0.8863

Epoch 27/40

15000/15000 [==============================] - 1s 81us/step - loss: 0.1480 - acc: 0.9543 - val_loss: 0.2856 - val_acc: 0.8863

Epoch 28/40

15000/15000 [==============================] - 1s 86us/step - loss: 0.1421 - acc: 0.9567 - val_loss: 0.2855 - val_acc: 0.8860

Epoch 29/40

15000/15000 [==============================] - 1s 84us/step - loss: 0.1361 - acc: 0.9596 - val_loss: 0.2868 - val_acc: 0.8872

Epoch 30/40

15000/15000 [==============================] - 1s 97us/step - loss: 0.1309 - acc: 0.9609 - val_loss: 0.2891 - val_acc: 0.8860

Epoch 31/40

15000/15000 [==============================] - 2s 111us/step - loss: 0.1258 - acc: 0.9631 - val_loss: 0.2920 - val_acc: 0.8843

Epoch 32/40

15000/15000 [==============================] - 1s 88us/step - loss: 0.1208 - acc: 0.9655 - val_loss: 0.2917 - val_acc: 0.8853

Epoch 33/40

15000/15000 [==============================] - 1s 84us/step - loss: 0.1160 - acc: 0.9684 - val_loss: 0.2937 - val_acc: 0.8860

Epoch 34/40

15000/15000 [==============================] - 1s 90us/step - loss: 0.1113 - acc: 0.9699 - val_loss: 0.2965 - val_acc: 0.8843

Epoch 35/40

15000/15000 [==============================] - 1s 87us/step - loss: 0.1069 - acc: 0.9711 - val_loss: 0.2994 - val_acc: 0.8842

Epoch 36/40

15000/15000 [==============================] - 1s 81us/step - loss: 0.1028 - acc: 0.9725 - val_loss: 0.3022 - val_acc: 0.8842

Epoch 37/40

15000/15000 [==============================] - 1s 87us/step - loss: 0.0994 - acc: 0.9735 - val_loss: 0.3044 - val_acc: 0.8845

Epoch 38/40

15000/15000 [==============================] - 1s 87us/step - loss: 0.0948 - acc: 0.9753 - val_loss: 0.3076 - val_acc: 0.8835

Epoch 39/40

15000/15000 [==============================] - 1s 83us/step - loss: 0.0911 - acc: 0.9766 - val_loss: 0.3112 - val_acc: 0.8839

Epoch 40/40

15000/15000 [==============================] - 1s 87us/step - loss: 0.0878 - acc: 0.9775 - val_loss: 0.3158 - val_acc: 0.8826

results = model.evaluate(test_data, test_labels)

print(results)

25000/25000 [==============================] - 4s 144us/step

[0.3395507445144653, 0.86964]

history_dict = history.history

history_dict.keys()

dict_keys(['val_loss', 'val_acc', 'loss', 'acc'])
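The `val_loss` entry of the history is useful for spotting where validation performance peaks. As a small sketch, the snippet below takes the validation losses for epochs 20 to 30 copied from the training log above and finds the epoch with the lowest value; with a real run, the same idea could be applied to the whole `history_dict['val_loss']` list.

```python
# Validation loss for epochs 20-30, copied from the training log above.
first_epoch = 20
val_loss = [0.2918, 0.2891, 0.2878, 0.2867, 0.2853, 0.2846,
            0.2848, 0.2856, 0.2855, 0.2868, 0.2891]

# Index of the smallest validation loss within this window.
best_offset = min(range(len(val_loss)), key=val_loss.__getitem__)
best_epoch = first_epoch + best_offset
print(best_epoch, val_loss[best_offset])
```

In this log the validation loss is lowest around epoch 25 and rises afterwards, while the training loss keeps falling through epoch 40.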

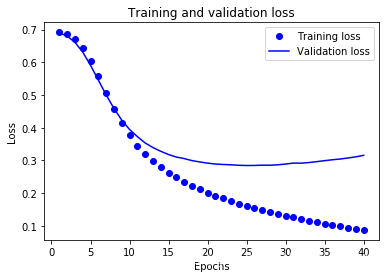

import matplotlib.pyplot as plt

acc = history.history['acc']

val_acc = history.history['val_acc']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs = range(1, len(acc) + 1)

# "bo" is for "blue dot"

plt.plot(epochs, loss, 'bo', label='Training loss')

# b is for "solid blue line"

plt.plot(epochs, val_loss, 'b', label='Validation loss')

plt.title('Training and validation loss')

plt.xlabel('Epochs')

plt.ylabel('Loss')

plt.legend()

plt.show()

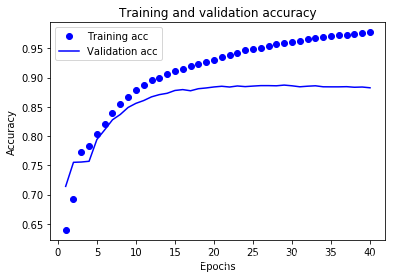

plt.clf() # clear figure

acc_values = history_dict['acc']

val_acc_values = history_dict['val_acc']

plt.plot(epochs, acc, 'bo', label='Training acc')

plt.plot(epochs, val_acc, 'b', label='Validation acc')

plt.title('Training and validation accuracy')

plt.xlabel('Epochs')

plt.ylabel('Accuracy')

plt.legend()

plt.show()

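3. Accuracy, Precision, and Recall

- After building a model in machine learning, data mining, or recommender systems, its performance needs to be evaluated.

For binary classification, the evaluation metrics most often used in practice include precision and recall, among others.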

TP——正类判别成正类

FN——正类判别成负类

FP——负类判别成正类

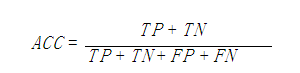

TN——负类判别成负类 - 准确率(Accuracy)

准确率(accuracy)计算公式为:

准确率是我们最常见的评价指标,而且很容易理解,就是被分对的样本数除以所有的样本数,通常来说,正确率越高,分类器越好。
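The four counts and the accuracy definition can be sketched in a few lines of Python. The function names and the eight labels below are made up for illustration; the positive class is assumed to be encoded as 1 and the negative class as 0.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, FN, FP, TN) for binary labels where 1 is the positive class."""
    tp = fn = fp = tn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth == 1 and pred == 1:
            tp += 1  # positive classified as positive
        elif truth == 1 and pred == 0:
            fn += 1  # positive classified as negative
        elif truth == 0 and pred == 1:
            fp += 1  # negative classified as positive
        else:
            tn += 1  # negative classified as negative
    return tp, fn, fp, tn


def accuracy(tp, fn, fp, tn):
    """Correctly classified samples divided by all samples."""
    return (tp + tn) / (tp + fn + fp + tn)


y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]
counts = confusion_counts(y_true, y_pred)
acc = accuracy(*counts)
print(counts, acc)
```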

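Accuracy is an intuitive metric, but high accuracy does not always mean an algorithm is good. Take predicting whether an earthquake will occur in some region on a given day. Suppose we have a set of features and only two classes: 0 (no earthquake) and 1 (earthquake). A classifier that blindly assigns class 0 to every test case might reach 99% accuracy, yet when a real earthquake comes it notices nothing, and the cost of that misclassification is enormous. Why is a classifier with 99% accuracy not what we want? Because the data is imbalanced: class 1 has very few examples, so misclassifying all of them still yields high accuracy while ignoring exactly what we care about.

Here is another example. When positive and negative samples are imbalanced, accuracy has a major flaw. In online advertising, clicks are rare, typically only a few per thousand impressions. If we use accuracy, predicting everything as negative (no click) still scores above 99%, which is meaningless. Evaluating a model by accuracy alone is therefore far from sufficient.

- Precision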

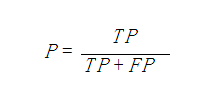

Formula: precision = TP / (TP + FP)

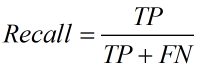

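Precision is the proportion of examples classified as positive that are actually positive.

- Recall

Formula: recall = TP / (TP + FN) = TP / P

Recall measures coverage: how many of the actual positive examples are classified as positive. Since TP / (TP + FN) is also the definition of sensitivity, recall and sensitivity are the same quantity.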
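The earthquake example can be turned into numbers. The sketch below uses made-up data (1000 days, 10 of them with an earthquake) and a classifier that always predicts class 0. The helper names are hypothetical, and returning 0.0 when a denominator is zero is a convention chosen here, not something the definitions prescribe.

```python
def precision(tp, fp):
    """TP / (TP + FP); returns 0.0 when nothing was predicted positive."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp, fn):
    """TP / (TP + FN); returns 0.0 when there are no positive examples."""
    return tp / (tp + fn) if (tp + fn) else 0.0


# 1000 days, 10 of them with an earthquake (made-up numbers).
y_true = [1] * 10 + [0] * 990
y_pred = [0] * 1000  # classifier that always predicts "no earthquake"

pairs = list(zip(y_true, y_pred))
tp = sum(1 for t, p in pairs if t == 1 and p == 1)
fn = sum(1 for t, p in pairs if t == 1 and p == 0)
fp = sum(1 for t, p in pairs if t == 0 and p == 1)
tn = sum(1 for t, p in pairs if t == 0 and p == 0)

acc = (tp + tn) / len(y_true)
prec = precision(tp, fp)
rec = recall(tp, fn)
print(acc, prec, rec)
```

Accuracy comes out at 99% even though the classifier never finds a single earthquake, which is why recall (and precision) are reported alongside it on imbalanced data.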

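4. ROC curve, AUC, and PR curve

- ROC curves and PR (Precision-Recall) curves are both common evaluation methods for class-imbalanced problems; they share some properties and differ in others.

- The ROC curve is commonly used to compare models on binary classification and shows the trade-off between the true positive rate (TPR) and the false positive rate (FPR). It is drawn by plotting TPR on the vertical axis against FPR on the horizontal axis at different classification thresholds. From the two metrics, TPR = TP/P = TP/(TP+FN) and FPR = FP/N = FP/(FP+TN), we can see that when the classifier labels a sample as positive, TPR increases if the sample is truly positive and FPR increases if it is truly negative. The ROC curve can therefore be seen as a "contest" between the positives and negatives among all samples as the threshold moves. The closer the curve is to the top-left corner, the more positives are ranked ahead of negatives, and the better the model performs overall.

- AUC is the area under the ROC curve. It can be interpreted as follows: pick a random sample A from all positives and a random sample B from all negatives; AUC is the probability that the classifier assigns A a higher probability of being positive than B. Points above the random-guess line (such as point A in the figure) are considered better than random guessing. At such points TPR is always greater than FPR, meaning a positive is more likely to be judged positive than a negative is.

- The PR curve plots precision against recall. Like the ROC curve it uses TPR (recall), and both curves can be summarized by an area under the curve to measure classifier quality. The difference is that the ROC curve uses FPR while the PR curve uses precision, so both PR metrics focus on the positive class. In class-imbalanced problems, where the positives are the main concern, the PR curve is widely considered preferable to the ROC curve.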
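The points above can be sketched in plain Python. The code below is an illustration on six made-up scores, with hypothetical function names: it sweeps the threshold to collect ROC points, computes AUC both as the trapezoidal area under those points and as the fraction of (positive, negative) pairs ranked correctly, and collects PR points in the same sweep. Ties between a positive and a negative score are counted as half a win, which is an assumption of this sketch.

```python
def roc_points(y_true, scores):
    """Return (FPR, TPR) pairs, sweeping the threshold from strict to loose."""
    positives = sum(y_true)
    negatives = len(y_true) - positives
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for t, s in zip(y_true, scores) if s >= threshold and t == 1)
        fp = sum(1 for t, s in zip(y_true, scores) if s >= threshold and t == 0)
        points.append((fp / negatives, tp / positives))
    return points


def auc_trapezoid(points):
    """Area under a piecewise-linear curve given as (x, y) points."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


def auc_pairwise(y_true, scores):
    """Fraction of (positive, negative) pairs where the positive scores higher."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def pr_points(y_true, scores):
    """Return (recall, precision) pairs for the same threshold sweep."""
    positives = sum(y_true)
    points = []
    for threshold in sorted(set(scores), reverse=True):
        predicted = [t for t, s in zip(y_true, scores) if s >= threshold]
        tp = sum(predicted)
        points.append((tp / positives, tp / len(predicted)))
    return points


y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]

roc = roc_points(y_true, scores)
area = auc_trapezoid(roc)
rank_auc = auc_pairwise(y_true, scores)
pr = pr_points(y_true, scores)
print(roc)
print(area, rank_auc)
print(pr)
```

On this toy data the two AUC computations agree (8 of the 9 positive-negative pairs are ordered correctly), which matches the probabilistic reading of AUC given above.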

References: https://www.imooc.com/article/48072

https://tensorflow.google.cn/tutorials/keras/basic_text_classification

https://www.cnblogs.com/Zhi-Z/p/8728168.html
