深度学习实践1--FashionMNIST分类_fashion mnist-程序员宅基地

目录

前言

随着人工智能的发展,深度学习也越发重要,目前深度学习可以应用到多方面,如图像处理领域、语音识别领域、自然语言处理邻域等。本篇是深度学习的入门篇,应用于图像分类。

一、深度学习是什么?

一般是指通过训练多层网络结构对未知数据进行分类或回归。

二、FashionMNIST分类

1.介绍数据集

Fashion-MNIST是Zalando的研究论文中提出的一个数据集,由包含60000个实例的训练集和包含10000个实例的测试集组成。每个实例包含一张28x28的灰度服饰图像和对应的类别标记(共有10类服饰,分别是:t-shirt(T恤),trouser(牛仔裤),pullover(套衫),dress(裙子),coat(外套),sandal(凉鞋),shirt(衬衫),sneaker(运动鞋),bag(包),ankle boot(短靴))。

2.获取数据集

使用torchvision.datasets.FashionMNIST获取内置数据集

import torchvision

from torchvision import transforms

# 将内置数据集的图片大小转为1*28*28后转化为tensor

# 可以对图片进行增广以增加精确度

train_transform = transforms.Compose([

transforms.Resize(28),

transforms.ToTensor()

])

# test_transform = transforms.Compose([])

train_data = torchvision.datasets.FashionMNIST(root='/data/FashionMNIST', train=True, download=True,

transform=train_transform)

test_data = torchvision.datasets.FashionMNIST(root='/data/FashionMNIST', train=False, download=True,

transform=train_transform)

print(len(train_data)) # 长度为60000

print(train_data[0])from torch.utils.data import Dataset

import pandas as pd

# 如果是自己的数据集需要自己构建dataset

class FashionMNISTDataset(Dataset):

def __init__(self, df, transform=None):

self.df = df

self.transform = transform

self.images = df.iloc[:, 1:].values.astype(np.uint8)

self.labels = df.iloc[:, 0].values

def __len__(self):

return len(self.images)

def __getitem__(self, idx):

image = self.images[idx].reshape(28, 28, 1)

label = int(self.labels[idx])

if self.transform is not None:

image = self.transform(image)

else:

image = torch.tensor(image / 255., dtype=torch.float)

label = torch.tensor(label, dtype=torch.long)

return image, label

train = pd.read_csv("../FashionMNIST/fashion-mnist_train.csv")

# train.head(10)

test = pd.read_csv("../FashionMNIST/fashion-mnist_test.csv")

# test.head(10)

print(len(test))

train_iter = FashionMNISTDataset(train, data_transform)

print(train_iter)

test_iter = FashionMNISTDataset(test, data_transform)3.分批迭代数据

调用DataLoader包迭代数据,shuffle用于打乱数据集,训练集需要打乱,测试集不用打乱

from torch.utils.data import DataLoader

batch_size = 256 # 迭代一批的大小(可以设置为其它的,一般用2**n)

num_workers = 4 # windows设置为0

train_iter = DataLoader(train_data, batch_size=batch_size, shuffle=True, num_workers=num_workers)

test_iter = DataLoader(test_data, batch_size=batch_size, shuffle=False, num_workers=num_workers)

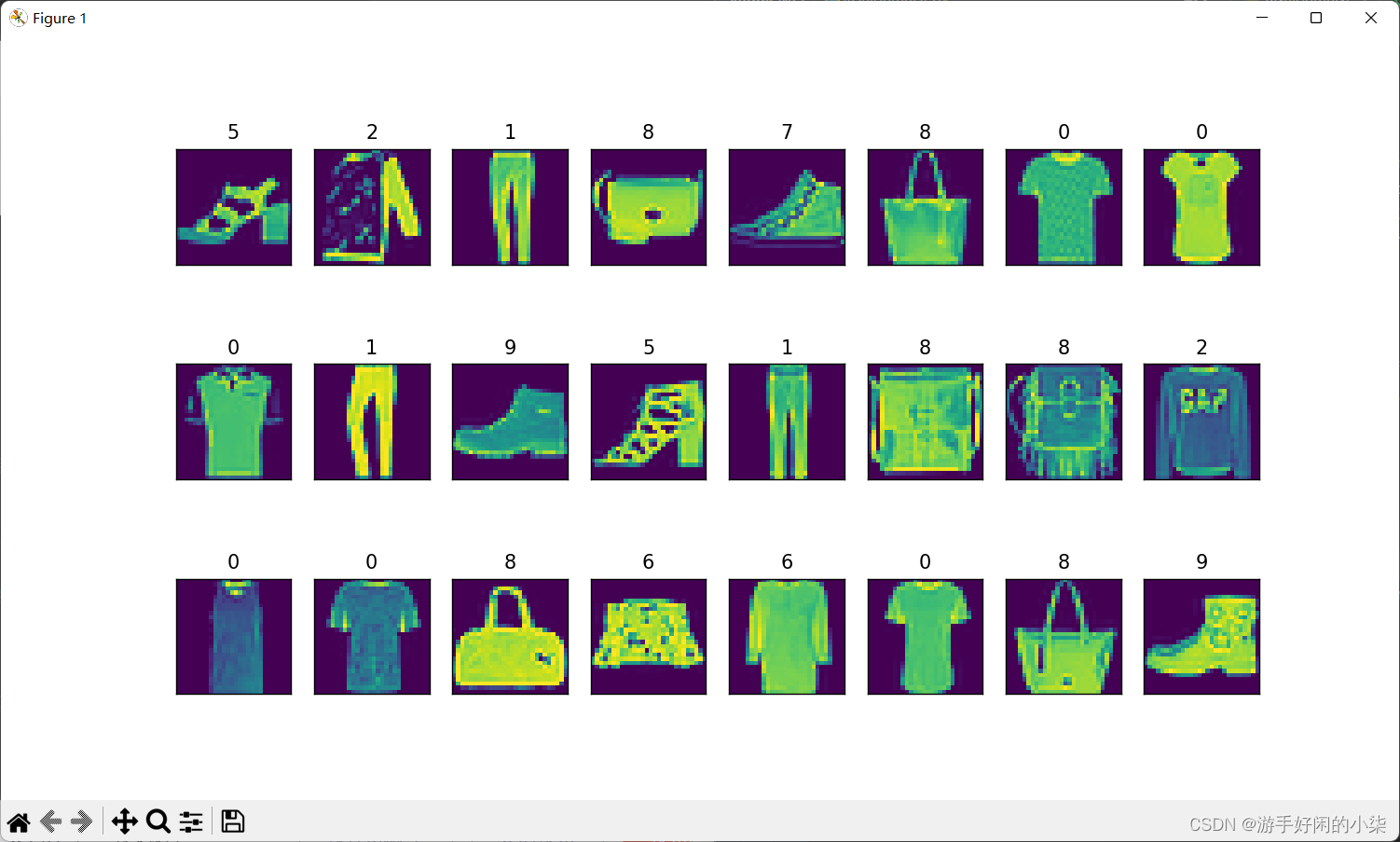

# print(len(train_iter)) # 2534.图片可视化

import matplotlib as plt

def show_images(imgs, num_rows, num_cols, targets, scale=1.5):

figsize = (num_cols * scale, num_rows * scale)

_, axes = plt.subplots(num_rows, num_cols, figsize=figsize)

axes = axes.flatten()

for ax, img, target in zip(axes, imgs, targets):

if torch.is_tensor(img):

# 图片张量

ax.imshow(img.numpy())

else:

# PIL

ax.imshow(img)

# 设置坐标轴不可见

ax.axes.get_xaxis().set_visible(False)

ax.axes.get_yaxis().set_visible(False)

plt.subplots_adjust(hspace=0.35)

ax.set_title('{}'.format(target))

return axes

# 将dataloader转换成迭代器才可以使用next方法

X, y = next(iter(train_iter))

show_images(X.squeeze(), 3, 8, targets=y)

plt.show()

5.构建网络模型

卷积->池化->激活->连接

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(1, 64, 1, padding=1)

self.conv2 = nn.Conv2d(64, 64, 3, padding=1)

self.pool1 = nn.MaxPool2d(2, 2)

self.bn1 = nn.BatchNorm2d(64)

self.relu1 = nn.ReLU()

self.conv3 = nn.Conv2d(64, 128, 3, padding=1)

self.conv4 = nn.Conv2d(128, 128, 3, padding=1)

self.pool2 = nn.MaxPool2d(2, 2, padding=1)

self.bn2 = nn.BatchNorm2d(128)

self.relu2 = nn.ReLU()

self.fc5 = nn.Linear(128 * 8 * 8, 512)

self.drop1 = nn.Dropout() # Dropout可以比较有效的缓解过拟合的发生,在一定程度上达到正则化的效果。

self.fc6 = nn.Linear(512, 10)

def forward(self, x):

x = self.conv1(x)

x = self.conv2(x)

x = self.pool1(x)

x = self.bn1(x)

x = self.relu1(x)

x = self.conv3(x)

x = self.conv4(x)

x = self.pool2(x)

x = self.bn2(x)

x = self.relu2(x)

# print(" x shape ",x.size())

x = x.view(-1, 128 * 8 * 8)

x = F.relu(self.fc5(x))

x = self.drop1(x)

x = self.fc6(x)

return x

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

model = Net()

model = model.to(device)

print(model)

6.选择损失函数和优化器

import torch.optim as optim

criterion = nn.CrossEntropyLoss() # 交叉熵损失

optimizer = optim.Adam(model.parameters(), lr=0.001) # lr->learning rate学习率7.训练

def train(epoch):

model.train()

train_loss = 0

for data,label in train_iter:

data,label = data.cuda() ,label.cuda()

optimizer.zero_grad()

output = model(data)

loss = criterion(output,label)

loss.backward() # 反向计算

optimizer.step() # 优化

train_loss += loss.item()*data.size(0)

train_loss = train_loss/len(train_iter.dataset)

print('Epoch:{}\tTraining Loss:{:.6f}'.format(epoch+1, train_loss))

# train(1)8.验证

def val():

model.eval()

val_loss = 0

gt_labels = []

pred_labels = []

with torch.no_grad():

for data, label in test_iter:

data, label = data.cuda(), label.cuda()

output = model(data)

preds = torch.argmax(output, 1)

gt_labels.append(label.cpu().data.numpy())

pred_labels.append(preds.cpu().data.numpy())

gt_labels, pred_labels = np.concatenate(gt_labels), np.concatenate(pred_labels)

acc = np.sum(gt_labels == pred_labels) / len(pred_labels)

print(gt_labels,pred_labels)

print('Accuracy: {:6f}'.format(acc))

9.训练并验证

epochs = 20

for epoch in range(epochs):

train(epoch)

val()

torch.save(model,"mymmodel.pth") # 保存模型10.写成csv

用于提交比赛

# 写成csv

model = torch.load("mymodel.pth")

model = model.to(device)

id = 0

preds_list = []

with torch.no_grad():

for x, y in test_iter:

batch_pred = list(model(x.to(device)).argmax(dim=1).cpu().numpy())

for y_pred in batch_pred:

preds_list.append((id, y_pred))

id += 1

# print(batch_pred)

with open('result.csv', 'w') as f:

f.write('Id,Category\n')

for id, pred in preds_list:

f.write('{},{}\n'.format(id, pred))11.完整代码

import torch

import torchvision

from torchvision import transforms

from torch.utils.data import Dataset, DataLoader

import matplotlib.pyplot as plt

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

import numpy as np

import pandas as pd

train_transform = transforms.Compose([

# transforms.ToPILImage(), # 这一步取决于后续的数据读取方式,如果使用内置数据集则不需要

transforms.Resize(28),

transforms.ToTensor()

])

# test_transform = transforms.Compose([

# transforms.ToTensor()

# ])

train_data = torchvision.datasets.FashionMNIST(root='/data/FashionMNIST', train=True, download=True,

transform=train_transform)

test_data = torchvision.datasets.FashionMNIST(root='/data/FashionMNIST', train=False, download=True,

transform=train_transform)

# print(test_data[0])

batch_size = 256

num_workers = 0

train_iter = DataLoader(train_data, batch_size=batch_size, shuffle=True, num_workers=num_workers)

test_iter = DataLoader(test_data, batch_size=batch_size, shuffle=False, num_workers=num_workers)

# # 如果是自己的数据集需要自己构建dataset

# class FashionMNISTDataset(Dataset):

# def __init__(self, df, transform=None):

# self.df = df

# self.transform = transform

# self.images = df.iloc[:, 1:].values.astype(np.uint8)

# self.labels = df.iloc[:, 0].values

#

# def __len__(self):

# return len(self.images)

#

# def __getitem__(self, idx):

# image = self.images[idx].reshape(28, 28, 1)

# label = int(self.labels[idx])

# if self.transform is not None:

# image = self.transform(image)

# else:

# image = torch.tensor(image / 255., dtype=torch.float)

# label = torch.tensor(label, dtype=torch.long)

# return image, label

#

#

# train = pd.read_csv("../FashionMNIST/fashion-mnist_train.csv")

# # train.head(10)

# test = pd.read_csv("../FashionMNIST/fashion-mnist_test.csv")

# # test.head(10)

# print(len(test))

# train_iter = FashionMNISTDataset(train, data_transform)

# print(train_iter)

# test_iter = FashionMNISTDataset(test, data_transform)

# print(train_iter)

def show_images(imgs, num_rows, num_cols, targets, scale=1.5):

"""Plot a list of images."""

figsize = (num_cols * scale, num_rows * scale)

_, axes = plt.subplots(num_rows, num_cols, figsize=figsize)

axes = axes.flatten()

for ax, img, target in zip(axes, imgs, targets):

if torch.is_tensor(img):

# 图片张量

ax.imshow(img.numpy())

else:

# PIL

ax.imshow(img)

# 设置坐标轴不可见

ax.axes.get_xaxis().set_visible(False)

ax.axes.get_yaxis().set_visible(False)

plt.subplots_adjust(hspace=0.35)

ax.set_title('{}'.format(target))

return axes

# 将dataloader转换成迭代器才可以使用next方法

# X, y = next(iter(train_iter))

# show_images(X.squeeze(), 3, 8, targets=y)

# plt.show()

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(1, 64, 1, padding=1)

self.conv2 = nn.Conv2d(64, 64, 3, padding=1)

self.pool1 = nn.MaxPool2d(2, 2)

self.bn1 = nn.BatchNorm2d(64)

self.relu1 = nn.ReLU()

self.conv3 = nn.Conv2d(64, 128, 3, padding=1)

self.conv4 = nn.Conv2d(128, 128, 3, padding=1)

self.pool2 = nn.MaxPool2d(2, 2, padding=1)

self.bn2 = nn.BatchNorm2d(128)

self.relu2 = nn.ReLU()

self.fc5 = nn.Linear(128 * 8 * 8, 512)

self.drop1 = nn.Dropout()

self.fc6 = nn.Linear(512, 10)

def forward(self, x):

x = self.conv1(x)

x = self.conv2(x)

x = self.pool1(x)

x = self.bn1(x)

x = self.relu1(x)

x = self.conv3(x)

x = self.conv4(x)

x = self.pool2(x)

x = self.bn2(x)

x = self.relu2(x)

# print(" x shape ",x.size())

x = x.view(-1, 128 * 8 * 8)

x = F.relu(self.fc5(x))

x = self.drop1(x)

x = self.fc6(x)

return x

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

model = Net()

model = model.to(device)

# print(model)

criterion = nn.CrossEntropyLoss()

optimizer = optim.Adam(model.parameters(), lr=0.001)

def train(epoch):

model.train()

train_loss = 0

train_loss_list = []

for data, label in train_iter:

data, label = data.cuda(), label.cuda()

optimizer.zero_grad()

output = model(data)

loss = criterion(output, label)

loss.backward()

optimizer.step()

train_loss += loss.item() * data.size(0)

train_loss = train_loss / len(train_iter.dataset)

train_loss_list.append(train_loss)

print('Epoch:{}\tTraining Loss:{:.6f}'.format(epoch + 1, train_loss))

def val():

model.eval()

gt_labels = []

pred_labels = []

acc_list = []

with torch.no_grad():

for data, label in test_iter:

data, label = data.cuda(), label.cuda()

output = model(data)

preds = torch.argmax(output, 1)

gt_labels.append(label.cpu().data.numpy())

pred_labels.append(preds.cpu().data.numpy())

gt_labels, pred_labels = np.concatenate(gt_labels), np.concatenate(pred_labels)

acc = np.sum(gt_labels == pred_labels) / len(pred_labels)

acc_list.append(acc)

print(gt_labels, pred_labels)

print('Accuracy: {:6f}'.format(acc))

epochs = 2

for epoch in range(epochs):

train(epoch)

val()

torch.save(model, "mymodel.pth")

# 写成csv

model = torch.load("mymodel.pth")

model = model.to(device)

id = 0

preds_list = []

with torch.no_grad():

for x, y in test_iter:

batch_pred = list(model(x.to(device)).argmax(dim=1).cpu().numpy())

for y_pred in batch_pred:

preds_list.append((id, y_pred))

id += 1

# print(preds_list)

with open('result_ljh.csv', 'w') as f:

f.write('Id,Category\n')

for id, pred in preds_list:

f.write('{},{}\n'.format(id, pred))

总结

里面还有许多可以改进的地方,如数据增广,调整超参数,卷积模型,调用多个GPU加快,同时还可以可视化损失及准确率。

智能推荐

C语言实现大数相乘运算_两个大数相乘c语音-程序员宅基地

文章浏览阅读1.3k次。本篇文章依然是有关TP2的内容。TP2主要思想:跳出整型浮点型的限制,定义新的容量比较大的数据类型,从而实现一些大数运算看了一些网上的算法和代码,也从前辈文章里得到一些灵感,产出一个用C语言实现大数相乘的算法废话不多说,直接上算法和代码t_EntierLong multiplication(t_EntierLong n1,t_EntierLong n2){ int i,j,m,c;//m是进位变量 t_EntierLong result; result.Negatif=f_两个大数相乘c语音

linux基本命令操作(一)_将搜索结果输出到用户主目录下的my.txt文件-程序员宅基地

文章浏览阅读333次。本文来自民工哥微信上的文章1.ls [选项] [目录名 | 列出相关目录下的所有目录和文件-a 列出包括.a开头的隐藏文件的所有文件-A 通-a,但不列出"."和".."-l 列出文件的详细信息-c 根据ctime排序显示-t 根据文件修改时间排序---color[=WHEN] 用色彩辨别文件类型 WHEN 可以是'never'、'always'或'auto'其中之一..._将搜索结果输出到用户主目录下的my.txt文件

预编译指令与宏定义-程序员宅基地

文章浏览阅读152次。#if #elif [defined(), !defined()] #else #ifdef #ifndef #endif // 条件编译/* 头文件防止多次被包含 */#ifndef ZLIB_H#define ZLIB_H#endif /* ZLIB_H *//* 用C方式来修饰函数与变量 */#ifdef __cplusplus..._预处理指令或编译器优化

【Python学习】Python的点滴积累_python怎么输出deff-程序员宅基地

文章浏览阅读347次。点滴积累一、Python中的map()函数与lambda()函数1.1 map()函数1.2 lambda()函数一、Python中的map()函数与lambda()函数1.1 map()函数用法:map(function, iterable, …)参数function: 传的是一个函数名,可以是python内置的,也可以是自定义的。参数iterable: 传的是一个可以迭代的对象,例如列表,元组,字符串…功能: 将iterable中的每一个元素执行一遍function例子一:例如,_python怎么输出deff

Android应用开发_andruid移动应用开发csdn-程序员宅基地

文章浏览阅读892次。随着智能手机和移动设备的日益普及,Android应用开发已经成为了一个越来越重要的领域。无论是个人还是企业,在这种趋势下都需要找到一种使用者可以轻松访问的方式展示他们的品牌或产品。同时,Android应用也成为了人们生活中必不可少的一部分,如社交媒体、在线购物、移动支付等。作为一个Android应用开发者,你需要对于Java和Kotlin编程语言有深入的了解,同时还需要学会如何使用工具集例如Android SDK进行开发,并能够理解Activity组件的生命周期和UI设计的布局。_andruid移动应用开发csdn

windows安装多版本JDK_windows安装多个jdk-程序员宅基地

文章浏览阅读4.4k次,点赞3次,收藏16次。windows安装jdk多版本安装并动态切换_windows安装多个jdk

随便推点

2023 最新版navicat 下载与安装 步骤及演示 (图示版)_navicat下载-程序员宅基地

文章浏览阅读7.5w次,点赞37次,收藏222次。Navicat 是一款功能强大的数据库管理工具,可支持多种数据库类型,如 MySQL、Oracle、SQLite、Redis、PostgreSQL 等等。随着数据管理的重要性越来越受到重视,Navicat 的使用率也开始逐渐上升。本文将为您详细介绍 2023 最新版 Navicat 的下载、安装和基本使用方法。通过图文详解,帮助您轻松上手使用 Navicat,提高数据库管理的效率。_navicat下载

element table数据量太大导致网页卡死崩溃_element表格数据多了卡-程序员宅基地

文章浏览阅读6.2k次。elementUI中的表格组件el-table性能不优,数据量大的时候,尤其是可操作表格,及其容易卡顿。_element表格数据多了卡

每日词根——vad(走)_vad的词源-程序员宅基地

文章浏览阅读1.5k次。身体和灵魂总有一个在路上vad,vas(wad) = to go来自拉丁语的vad,vas意为to go。变形为wad,同义词根有来自拉丁语的ced/cess(ceed,ceas),grad/gred/gress,it 和算来希腊语的bat(bet,bit)以及来自盎格鲁-撒克逊语的fare。(*拉丁文vadere(=to go)——英文字根字典)1.pervade (这儿、那儿,一一走遍per(=through,throughout) + vad(=go))vt.遍及;弥漫于,渗透于per_vad的词源

python | 关于计算机内小数不精确的问题_python小数乘法有误差-程序员宅基地

文章浏览阅读3.1k次,点赞2次,收藏6次。原因解释:浮点数(小数)在计算机中实际是以二进制存储的,并不精确。比如0.1是十进制,转换为二进制后就是一个无限循环的数:0.00011001100110011001100110011001100110011001100110011001100python是以双精度(64bit)来保存浮点数的,后面多余的会被砍掉,所以在电脑上实际保存的已经小于0.1的值了,后面拿来参与运算就产生了误差。解决办法:使用decimal库python中的decimal模块可以解决上面的烦恼decima._python小数乘法有误差

kylin2.3作业将结果写入hbase时报错TableNotFoundException_kylin 元数据中显示不存在的表-程序员宅基地

文章浏览阅读4.1k次。执行kylin作业报错,这个作业是要把运行结果写入到hbase的表里的,但是再写入hbase过程中报错hbase中没有表 'kylin_metadata'。错误日志摘要——2018-05-07 20:28:46,137 WARN [main] util.HeapMemorySizeUtil:55 : hbase.regionserver.global.memstore.upperLimit is..._kylin 元数据中显示不存在的表

get请求和post请求的区别(简洁易懂)-程序员宅基地

文章浏览阅读2.5w次,点赞48次,收藏332次。get和post的区别_get请求和post请求