Running the MapReduce Examples (hadoop-mapreduce-examples-*.jar)

[root@master hadoop-2.7.4]# ./bin/yarn jar /opt/hadoop-2.7.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.4.jar

An example program must be given as the first argument.

Valid program names are:

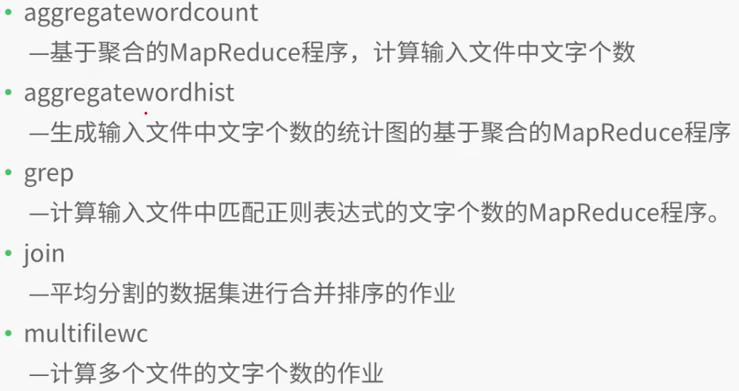

aggregatewordcount: An Aggregate based map/reduce program that counts the words in the input files.

aggregatewordhist: An Aggregate based map/reduce program that computes the histogram of the words in the input files.

bbp: A map/reduce program that uses Bailey-Borwein-Plouffe to compute exact digits of Pi.

dbcount: An example job that count the pageview counts from a database.

distbbp: A map/reduce program that uses a BBP-type formula to compute exact bits of Pi.

grep: A map/reduce program that counts the matches of a regex in the input.

join: A job that effects a join over sorted, equally partitioned datasets

multifilewc: A job that counts words from several files.

pentomino: A map/reduce tile laying program to find solutions to pentomino problems.

pi: A map/reduce program that estimates Pi using a quasi-Monte Carlo method.

randomtextwriter: A map/reduce program that writes 10GB of random textual data per node.

randomwriter: A map/reduce program that writes 10GB of random data per node.

secondarysort: An example defining a secondary sort to the reduce.

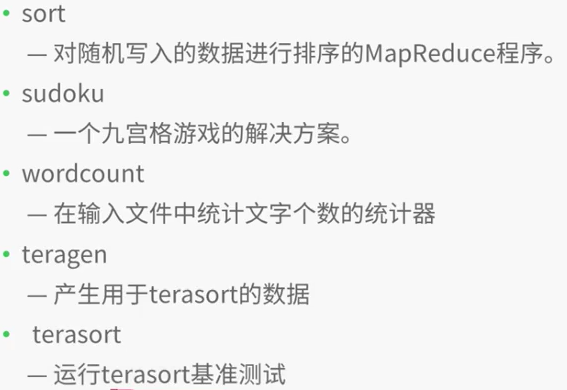

sort: A map/reduce program that sorts the data written by the random writer.

sudoku: A sudoku solver.

teragen: Generate data for the terasort

terasort: Run the terasort

teravalidate: Checking results of terasort

wordcount: A map/reduce program that counts the words in the input files.

wordmean: A map/reduce program that counts the average length of the words in the input files.

wordmedian: A map/reduce program that counts the median length of the words in the input files.

wordstandarddeviation: A map/reduce program that counts the standard deviation of the length of the words in the input files.
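
The `pi` entry in the list above describes a quasi-Monte Carlo estimate. As a rough illustration of that idea (not the Hadoop source), the sketch below takes points from a 2-D Halton sequence, assumed here to use bases 2 and 3, and counts how many fall inside the circle inscribed in the unit square. The names `halton` and `estimate_pi` are made up for this example.

```python
import math


def halton(index: int, base: int) -> float:
    """Return the index-th element (1-based) of the Halton sequence for `base`."""
    result = 0.0
    fraction = 1.0 / base
    while index > 0:
        result += fraction * (index % base)
        index //= base
        fraction /= base
    return result


def estimate_pi(num_samples: int) -> float:
    """Estimate Pi from the share of quasi-random points inside the inscribed circle."""
    inside = 0
    for i in range(1, num_samples + 1):
        # Shift the point so the circle of radius 0.5 is centred at the origin.
        x = halton(i, 2) - 0.5
        y = halton(i, 3) - 0.5
        if x * x + y * y <= 0.25:
            inside += 1
    # Area of circle / area of square = pi / 4.
    return 4.0 * inside / num_samples


if __name__ == "__main__":
    print(estimate_pi(100_000), "vs", math.pi)
```

Because the points are spread evenly rather than drawn at random, the estimate tends to settle faster than a plain Monte Carlo run with the same number of samples.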

[root@master hadoop-2.7.4]# ./bin/yarn jar /opt/hadoop-2.7.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.4.jar wordcount

Usage: wordcount <in> [<in>...] <out>

[root@master hadoop-2.7.4]# ./bin/yarn jar /opt/hadoop-2.7.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.4.jar pi

Usage: org.apache.hadoop.examples.QuasiMonteCarlo <nMaps> <nSamples>

Generic options supported are

-conf <configuration file> specify an application configuration file

-D <property=value> use value for given property

-fs <local|namenode:port> specify a namenode

-jt <local|resourcemanager:port> specify a ResourceManager

-files <comma separated list of files> specify comma separated files to be copied to the map reduce cluster

-libjars <comma separated list of jars> specify comma separated jar files to include in the classpath.

-archives <comma separated list of archives> specify comma separated archives to be unarchived on the compute machines.

The general command line syntax is

bin/hadoop command [genericOptions] [commandOptions]

[root@master hadoop-2.7.4]# jps

4912 NameNode

9265 NodeManager

9155 ResourceManager

9561 Jps

5195 SecondaryNameNode

5038 DataNode
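
The `jps` listing shows the HDFS and YARN daemons all running on this one host, which is what a single-node setup needs before a job is submitted. A small sketch for checking such a listing programmatically follows; the helper name `missing_daemons` and the expected set are assumptions for this example, and on a multi-node cluster the daemons would be spread over several hosts.

```python
# Daemons assumed to be required on a single-node (pseudo-distributed) setup.
EXPECTED = {
    "NameNode",
    "DataNode",
    "SecondaryNameNode",
    "ResourceManager",
    "NodeManager",
}


def missing_daemons(jps_output: str, expected=EXPECTED) -> set:
    """Return the expected daemon names that do not appear in the jps output."""
    running = set()
    for line in jps_output.splitlines():
        parts = line.split()
        if len(parts) == 2:  # each line looks like "<pid> <main class name>"
            running.add(parts[1])
    return set(expected) - running


# Output copied from the jps call above.
JPS_OUTPUT = """\
4912 NameNode
9265 NodeManager
9155 ResourceManager
9561 Jps
5195 SecondaryNameNode
5038 DataNode
"""

if __name__ == "__main__":
    print(missing_daemons(JPS_OUTPUT) or "all expected daemons are running")
```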

[root@master hadoop-2.7.4]# ./bin/yarn jar /opt/hadoop-2.7.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.4.jar wordcount /input /output2

17/12/17 16:28:33 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032

17/12/17 16:28:35 INFO input.FileInputFormat: Total input paths to process : 1

17/12/17 16:28:35 INFO mapreduce.JobSubmitter: number of splits:1

17/12/17 16:28:35 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1513499297109_0001

17/12/17 16:28:36 INFO impl.YarnClientImpl: Submitted application application_1513499297109_0001

17/12/17 16:28:37 INFO mapreduce.Job: The url to track the job: http://centos:8088/proxy/application_1513499297109_0001/

17/12/17 16:28:37 INFO mapreduce.Job: Running job: job_1513499297109_0001

17/12/17 16:29:06 INFO mapreduce.Job: Job job_1513499297109_0001 running in uber mode : false

17/12/17 16:29:06 INFO mapreduce.Job: map 0% reduce 0%

17/12/17 16:29:25 INFO mapreduce.Job: map 100% reduce 0%

17/12/17 16:29:40 INFO mapreduce.Job: map 100% reduce 100%

17/12/17 16:29:41 INFO mapreduce.Job: Job job_1513499297109_0001 completed successfully

17/12/17 16:29:42 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=339

FILE: Number of bytes written=242217

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=267

HDFS: Number of bytes written=217

HDFS: Number of read operations=6

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Launched reduce tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=16910

Total time spent by all reduces in occupied slots (ms)=9673

Total time spent by all map tasks (ms)=16910

Total time spent by all reduce tasks (ms)=9673

Total vcore-milliseconds taken by all map tasks=16910

Total vcore-milliseconds taken by all reduce tasks=9673

Total megabyte-milliseconds taken by all map tasks=17315840

Total megabyte-milliseconds taken by all reduce tasks=9905152

Map-Reduce Framework

Map input records=4

Map output records=31

Map output bytes=295

Map output materialized bytes=339

Input split bytes=95

Combine input records=31

Combine output records=29

Reduce input groups=29

Reduce shuffle bytes=339

Reduce input records=29

Reduce output records=29

Spilled Records=58

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=166

CPU time spent (ms)=1380

Physical memory (bytes) snapshot=279044096

Virtual memory (bytes) snapshot=4160716800

Total committed heap usage (bytes)=138969088

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=172

File Output Format Counters

Bytes Written=217
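
A few of the counters above can be cross-checked against each other. The sketch below is a quick sanity check, not anything Hadoop ships: it parses `name=value` lines copied from the output and derives two figures. Dividing megabyte-milliseconds by vcore-milliseconds gives 1024 for both maps and reduces, which would be consistent with 1024 MB containers using one vcore each (the container settings themselves are not shown in the log). The difference between combine input and output records is the number of duplicate pairs the combiner merged on the map side.

```python
# Counter lines copied from the wordcount job output above.
COUNTERS = """\
Total vcore-milliseconds taken by all map tasks=16910
Total vcore-milliseconds taken by all reduce tasks=9673
Total megabyte-milliseconds taken by all map tasks=17315840
Total megabyte-milliseconds taken by all reduce tasks=9905152
Combine input records=31
Combine output records=29
"""


def parse_counters(text: str) -> dict:
    """Turn 'name=value' lines into a dict of integer counters."""
    counters = {}
    for line in text.splitlines():
        name, sep, value = line.rpartition("=")
        if sep:
            counters[name.strip()] = int(value)
    return counters


c = parse_counters(COUNTERS)

# Memory per vcore implied by the counters, in MB.
map_mb = (c["Total megabyte-milliseconds taken by all map tasks"]
          // c["Total vcore-milliseconds taken by all map tasks"])
reduce_mb = (c["Total megabyte-milliseconds taken by all reduce tasks"]
             // c["Total vcore-milliseconds taken by all reduce tasks"])

# Records removed by the combiner before the shuffle.
merged = c["Combine input records"] - c["Combine output records"]

print(map_mb, reduce_mb, merged)
```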

[root@master hadoop-2.7.4]# ./bin/hdfs dfs -ls /output2/

Found 2 items

-rw-r--r-- 1 root supergroup 0 2017-12-17 16:29 /output2/_SUCCESS

-rw-r--r-- 1 root supergroup 217 2017-12-17 16:29 /output2/part-r-00000

[root@master hadoop-2.7.4]# ./bin/hdfs dfs -cat /output2/part-r-00000

78 1

ai 1

daokc 1

dfksdhlsd 1

dkhgf 1

docke 1

docker 1

erhejd 1

fdjk 1

fdskre 1

fjdk 1

fjdks 1

fjksl 1

fsd 1

go 1

haddop 1

hello 3

hi 1

hki 1

jfdk 1

scalw 1

sd 1

sdkf 1

sdkfj 1

sdl 1

sstem 1

woekd 1

yfdskt 1

yuihej 1
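
The output above lists each distinct token with its count, sorted by key. What `wordcount` does can be sketched in plain Python as a map step that emits `(word, 1)` pairs and a reduce step that sums them per word. This illustrates the idea only and is not the Hadoop implementation; the sample lines are invented, since the contents of `/input` are not shown.

```python
from collections import defaultdict


def map_phase(lines):
    """Emit a (word, 1) pair for every whitespace-separated token."""
    for line in lines:
        for word in line.split():
            yield word, 1


def reduce_phase(pairs):
    """Sum the counts per word and return them ordered by key."""
    totals = defaultdict(int)
    for word, count in pairs:
        totals[word] += count
    return dict(sorted(totals.items()))


# Hypothetical input lines, standing in for the file under /input.
sample = ["hello docker", "hello go", "hi hello"]
counts = reduce_phase(map_phase(sample))

for word, count in counts.items():
    print(word, count)
```

In the real job the summing also runs as a combiner on the map side, which is why the counters report fewer combine output records than combine input records.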

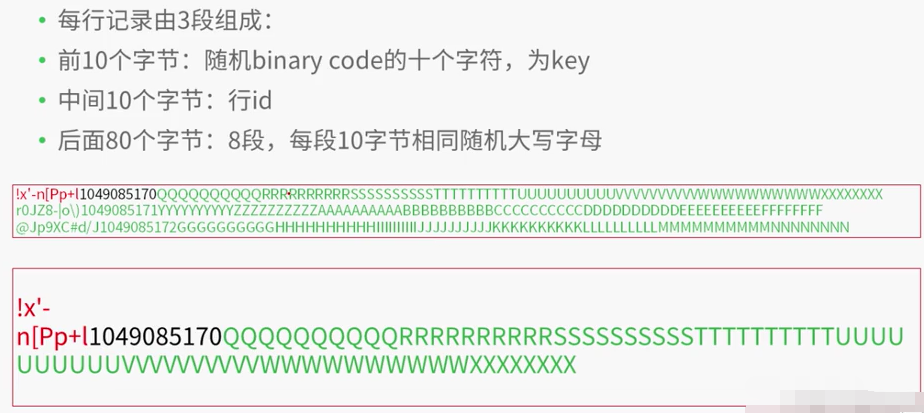

[root@master hadoop-2.7.4]# ./bin/yarn jar /opt/hadoop-2.7.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.4.jar teragen

teragen <num rows> <output dir>

[root@master hadoop-2.7.4]# ./bin/yarn jar /opt/hadoop-2.7.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.4.jar teragen 10000 /teragen

17/12/17 16:36:48 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032

17/12/17 16:36:49 INFO terasort.TeraSort: Generating 10000 using 2

17/12/17 16:36:50 INFO mapreduce.JobSubmitter: number of splits:2

17/12/17 16:36:50 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1513499297109_0002

17/12/17 16:36:50 INFO impl.YarnClientImpl: Submitted application application_1513499297109_0002

17/12/17 16:36:50 INFO mapreduce.Job: The url to track the job: http://centos:8088/proxy/application_1513499297109_0002/

17/12/17 16:36:50 INFO mapreduce.Job: Running job: job_1513499297109_0002

17/12/17 16:37:01 INFO mapreduce.Job: Job job_1513499297109_0002 running in uber mode : false

17/12/17 16:37:01 INFO mapreduce.Job: map 0% reduce 0%

17/12/17 16:37:19 INFO mapreduce.Job: map 100% reduce 0%

17/12/17 16:37:21 INFO mapreduce.Job: Job job_1513499297109_0002 completed successfully

17/12/17 16:37:21 INFO mapreduce.Job: Counters: 31

File System Counters

FILE: Number of bytes read=0

FILE: Number of bytes written=240922

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=164

HDFS: Number of bytes written=1000000

HDFS: Number of read operations=8

HDFS: Number of large read operations=0

HDFS: Number of write operations=4

Job Counters

Launched map tasks=2

Other local map tasks=2

Total time spent by all maps in occupied slots (ms)=30146

Total time spent by all reduces in occupied slots (ms)=0

Total time spent by all map tasks (ms)=30146

Total vcore-milliseconds taken by all map tasks=30146

Total megabyte-milliseconds taken by all map tasks=30869504

Map-Reduce Framework

Map input records=10000

Map output records=10000

Input split bytes=164

Spilled Records=0

Failed Shuffles=0

Merged Map outputs=0

GC time elapsed (ms)=434

CPU time spent (ms)=1400

Physical memory (bytes) snapshot=161800192

Virtual memory (bytes) snapshot=4156805120

Total committed heap usage (bytes)=35074048

org.apache.hadoop.examples.terasort.TeraGen$Counters

CHECKSUM=21555350172850

File Input Format Counters

Bytes Read=0

File Output Format Counters

Bytes Written=1000000
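
`teragen` writes fixed-size rows of 100 bytes each, which is why 10000 rows produce exactly the 1000000 bytes reported by `Bytes Written`. The arithmetic is sketched below and can help when choosing `<num rows>` for a target data size; the helper names are made up for this example.

```python
ROW_BYTES = 100  # size of one teragen row in bytes


def dataset_bytes(num_rows: int) -> int:
    """Total bytes teragen writes for the given number of rows."""
    return num_rows * ROW_BYTES


def rows_for_bytes(target_bytes: int) -> int:
    """Number of whole rows that fit into the target size."""
    return target_bytes // ROW_BYTES


total = dataset_bytes(10_000)
per_map = total // 2  # this run used 2 map tasks

print(total)                   # total bytes for 10000 rows
print(per_map)                 # bytes written by each of the 2 map tasks
print(rows_for_bytes(10**12))  # rows needed for 1 TB (decimal)
```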

[root@master hadoop-2.7.4]# ./bin/hdfs dfs -ls /teragen

Found 3 items

-rw-r--r-- 1 root supergroup 0 2017-12-17 16:37 /teragen/_SUCCESS

-rw-r--r-- 1 root supergroup 500000 2017-12-17 16:37 /teragen/part-m-00000

-rw-r--r-- 1 root supergroup 500000 2017-12-17 16:37 /teragen/part-m-00001

[root@centos hadoop-2.7.4]# ./bin/yarn jar /opt/hadoop-2.7.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.4.jar terasort /teragen /terasort

17/12/17 16:46:24 INFO terasort.TeraSort: starting

17/12/17 16:46:25 INFO input.FileInputFormat: Total input paths to process : 2

Spent 135ms computing base-splits.

Spent 3ms computing TeraScheduler splits.

Computing input splits took 139ms

Sampling 2 splits of 2

Making 1 from 10000 sampled records

Computing parititions took 384ms

Spent 530ms computing partitions.

17/12/17 16:46:26 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032

17/12/17 16:46:27 INFO mapreduce.JobSubmitter: number of splits:2

17/12/17 16:46:27 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1513499297109_0003

17/12/17 16:46:28 INFO impl.YarnClientImpl: Submitted application application_1513499297109_0003

17/12/17 16:46:28 INFO mapreduce.Job: The url to track the job: http://centos:8088/proxy/application_1513499297109_0003/

17/12/17 16:46:28 INFO mapreduce.Job: Running job: job_1513499297109_0003

17/12/17 16:46:38 INFO mapreduce.Job: Job job_1513499297109_0003 running in uber mode : false

17/12/17 16:46:38 INFO mapreduce.Job: map 0% reduce 0%

17/12/17 16:47:19 INFO mapreduce.Job: map 100% reduce 0%

17/12/17 16:47:41 INFO mapreduce.Job: map 100% reduce 100%

17/12/17 16:47:44 INFO mapreduce.Job: Job job_1513499297109_0003 completed successfully

17/12/17 16:47:45 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=1040006

FILE: Number of bytes written=2445488

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=1000208

HDFS: Number of bytes written=1000000

HDFS: Number of read operations=9

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=2

Launched reduce tasks=1

Data-local map tasks=2

Total time spent by all maps in occupied slots (ms)=87622

Total time spent by all reduces in occupied slots (ms)=12795

Total time spent by all map tasks (ms)=87622

Total time spent by all reduce tasks (ms)=12795

Total vcore-milliseconds taken by all map tasks=87622

Total vcore-milliseconds taken by all reduce tasks=12795

Total megabyte-milliseconds taken by all map tasks=89724928

Total megabyte-milliseconds taken by all reduce tasks=13102080

Map-Reduce Framework

Map input records=10000

Map output records=10000

Map output bytes=1020000

Map output materialized bytes=1040012

Input split bytes=208

Combine input records=0

Combine output records=0

Reduce input groups=10000

Reduce shuffle bytes=1040012

Reduce input records=10000

Reduce output records=10000

Spilled Records=20000

Shuffled Maps =2

Failed Shuffles=0

Merged Map outputs=2

GC time elapsed (ms)=3246

CPU time spent (ms)=3580

Physical memory (bytes) snapshot=400408576

Virtual memory (bytes) snapshot=6236995584

Total committed heap usage (bytes)=262987776

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=1000000

File Output Format Counters

Bytes Written=1000000

17/12/17 16:47:45 INFO terasort.TeraSort: done
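
The log lines about sampling and computing partitions refer to how `terasort` keeps the output globally ordered: it samples keys from the input, derives cut points from the sample, and routes each key to the reducer whose range contains it. The sketch below illustrates that general total-order partitioning idea in plain Python under simplifying assumptions (evenly spaced cut points from a sorted sample, binary search for the lookup). It is not the TeraSort code, and the function names are hypothetical. With a single reduce task, as in this run, no cut points are needed at all.

```python
import bisect


def choose_cut_points(sample_keys, num_reducers):
    """Pick num_reducers - 1 evenly spaced keys from the sorted sample."""
    ordered = sorted(sample_keys)
    step = len(ordered) / num_reducers
    return [ordered[int(step * i)] for i in range(1, num_reducers)]


def partition_for(key, cut_points):
    """Index of the reducer whose key range contains `key`."""
    return bisect.bisect_right(cut_points, key)


# Invented sample keys; real terasort keys are 10 bytes long.
sample = [b"m", b"c", b"x", b"a", b"t", b"h"]

cuts_three = choose_cut_points(sample, 3)  # two cut points for three reducers
cuts_one = choose_cut_points(sample, 1)    # no cut points for one reducer

print(cuts_three, [partition_for(k, cuts_three) for k in (b"b", b"h", b"z")])
print(cuts_one, partition_for(b"z", cuts_one))
```

Since every reducer receives a contiguous key range, concatenating the sorted reducer outputs in order yields a fully sorted dataset.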

[root@centos hadoop-2.7.4]# ./bin/hdfs dfs -ls /terasort

Found 3 items

-rw-r--r-- 1 root supergroup 0 2017-12-17 16:47 /terasort/_SUCCESS

-rw-r--r-- 10 root supergroup 0 2017-12-17 16:46 /terasort/_partition.lst

-rw-r--r-- 1 root supergroup 1000000 2017-12-17 16:47 /terasort/part-r-00000

[root@centos hadoop-2.7.4]# ./bin/yarn jar /opt/hadoop-2.7.4/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.4.jar teravalidate /terasort /report

17/12/17 17:03:46 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032

17/12/17 17:03:48 INFO input.FileInputFormat: Total input paths to process : 1

Spent 56ms computing base-splits.

Spent 3ms computing TeraScheduler splits.

17/12/17 17:03:48 INFO mapreduce.JobSubmitter: number of splits:1

17/12/17 17:03:49 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1513499297109_0007

17/12/17 17:03:49 INFO impl.YarnClientImpl: Submitted application application_1513499297109_0007

17/12/17 17:03:49 INFO mapreduce.Job: The url to track the job: http://centos:8088/proxy/application_1513499297109_0007/

17/12/17 17:03:49 INFO mapreduce.Job: Running job: job_1513499297109_0007

17/12/17 17:04:00 INFO mapreduce.Job: Job job_1513499297109_0007 running in uber mode : false

17/12/17 17:04:00 INFO mapreduce.Job: map 0% reduce 0%

17/12/17 17:04:08 INFO mapreduce.Job: map 100% reduce 0%

17/12/17 17:04:19 INFO mapreduce.Job: map 100% reduce 100%

17/12/17 17:04:20 INFO mapreduce.Job: Job job_1513499297109_0007 completed successfully

17/12/17 17:04:20 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=92

FILE: Number of bytes written=241805

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=1000105

HDFS: Number of bytes written=22

HDFS: Number of read operations=6

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Launched reduce tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=4952

Total time spent by all reduces in occupied slots (ms)=8032

Total time spent by all map tasks (ms)=4952

Total time spent by all reduce tasks (ms)=8032

Total vcore-milliseconds taken by all map tasks=4952

Total vcore-milliseconds taken by all reduce tasks=8032

Total megabyte-milliseconds taken by all map tasks=5070848

Total megabyte-milliseconds taken by all reduce tasks=8224768

Map-Reduce Framework

Map input records=10000

Map output records=3

Map output bytes=80

Map output materialized bytes=92

Input split bytes=105

Combine input records=0

Combine output records=0

Reduce input groups=3

Reduce shuffle bytes=92

Reduce input records=3

Reduce output records=1

Spilled Records=6

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=193

CPU time spent (ms)=1250

Physical memory (bytes) snapshot=281731072

Virtual memory (bytes) snapshot=4160716800

Total committed heap usage (bytes)=139284480

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=1000000

File Output Format Counters

Bytes Written=22

[root@centos hadoop-2.7.4]# ./bin/hdfs dfs -ls /report

Found 2 items

-rw-r--r-- 1 root supergroup 0 2017-12-17 17:04 /report/_SUCCESS

-rw-r--r-- 1 root supergroup 22 2017-12-17 17:04 /report/part-r-00000

[root@centos hadoop-2.7.4]# ./bin/hdfs dfs -cat /report/part-r-00000

checksum 139abefd74b2
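
The report contains only a checksum line. As far as I know, `teravalidate` also writes any ordering errors it finds into this file, so none were reported here. The checksum itself can be compared with the `CHECKSUM` counter printed by `teragen`: the report is in hexadecimal, the counter in decimal. A minimal check, with a helper name made up for this example:

```python
TERAGEN_CHECKSUM = 21555350172850      # CHECKSUM counter from the teragen run
REPORT_LINE = "checksum 139abefd74b2"  # content of /report/part-r-00000


def report_checksum(line: str) -> int:
    """Parse a 'checksum <hex>' line into an integer."""
    label, value = line.split()
    if label != "checksum":
        raise ValueError(f"unexpected report line: {line!r}")
    return int(value, 16)


matches = report_checksum(REPORT_LINE) == TERAGEN_CHECKSUM
print(matches)  # True: the sorted data carries the same checksum as the generated data
```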
